The Machine Stops

The Library in a Post-AI University

The Machine Stops: E. M. Forster 1909

Librarians were among the first to notice the rise of GenAI use by students. So it shouldn’t be a surprise that we’re also the first to see its decline.

I remember when, three years ago, a student brought me a list of AI-generated citations, most of them fabricated. Back then, GenAI meant chatbots presenting as a strange hybrid of social media and search engine. This was a new and unsettling development, but the work felt familiar. Untangling the mess and figuring out what had gone wrong took time. We’d been through this before, when Google and Wikipedia first appeared with their own confusions and anxieties. The task then was to learn how these tools actually worked and to figure out how well they served, or undermined, our core mission of research and scholarship.

Within the university, I believe we notice these things first because the library is where students turn when they have questions or problems with any strange new tool or method. They come to us before they ask their instructors. Why? Because although librarians at my institution are faculty, and we teach, we don’t grade anyone and we don’t judge. We’re just present, in human form, one on one, waiting for someone to ask us something. We don’t even have to say out loud that the conversation is confidential, or that we aren’t your instructor or your parent.

This is by design. Libraries and librarians have carefully cultivated and protected their reputation for trust through every wave of technological change. No matter how virtual or tokenized information becomes, no matter how many paper books turn into glitchy eBooks, no matter how much of the physical collection moves to offsite storage, the library remains the place where someone can ask a simple question: “Can I trust this? (I’m asking because I trust you.)”

To put it in terms that AI enthusiasts will recognize: if “library” were an AI app, you could say that our values and RLHF were designed to be “aligned” with an educational mission built on trust. That mission is the foundation model, and everything else is a wrapper built around it. When Google and Wikipedia arrived, that was the vantage point from which we evaluated those tools: did they align with our mission? Now we have GenAI. The view from the library is still, I hope, a trusted view.

Holloway Reading Stand, ca. 1890

But here’s what I didn’t anticipate: that GenAI mania would take over everything with its electric practical magic. So, it’s an answer machine? A personal research assistant? A tool that can produce literature reviews, conduct “deep research,” write, edit, design slides, and, as we used to joke years ago, bake cookies if you prompt it correctly? The IT department was all in because, after all, it worked. You put something in and something interesting happened. Language came out, or images, or infographics, or slide shows. Press a button and it produces something. Privacy is protected, the system functions, and everything seems fine.

No. Both administrators and IT fell victim to the “illusion of competence” that comes with AI use. The reasoning goes like this: if the marketing copy says this is a miraculous new “research assistant,” and you put something in and quickly get something out that looks like research, something that sounds a little too smooth yet profound enough to tickle your brain, then you must be doing *actual research*. Put more stuff in and that’s “deep research.” Again, no. These groups are convinced they are on the right path, but they may simply be less immune to “bureaucratic sycophancy” than they suppose.

I’ve written about this before, so why go over old ground? First, librarians have resisted this view of GenAI from both IT and the administration, mostly successfully, although it’s been dicey at times. Second, there is a crucial difference this time around: we never paid for or endorsed the original Google or Wikipedia. They were free, and we were free to evaluate them neutrally and critically. We never imported the marketing copy for those tools wholesale and placed it all over our university’s website. We weren’t pushed, in ways both subtle and not so subtle, to adopt them.

The pressure to be less critical of GenAI was enormous. Librarians (myself included, myself in particular) were asked to step back, and we did. We stepped back from interacting with other departments such as Teaching and Learning and IT. No negative public comments and no collaboration. There was a bigger picture here, they said. There were larger issues at stake, they said. We were Luddites.

Step back. I don’t understand how less collaboration, rather than more, could possibly benefit anyone, but here we are. The view from the library became a thing apart.

Our criticism was never as absolute as it might have appeared. We’ve also been deeply curious, and we have tested and experimented with all of it. But I hope our skepticism and caution about adoption have preserved that core quality of trust through this latest change. I think it was worth it.

Now the tide is going out. If GenAI was a wave, it’s receding. After several years of intensively evaluating the GenAI tools my university has purchased, I’ve come to believe that very few students are actually using them. The AI tsunami started well outside the university, with students using free and compelling conversational chatbots, and there’s no evidence that they’ve switched to university-sponsored tools.

In fact, students may be avoiding institutional tools because they distrust them. They don’t trust that their use of institutionally provided AI won’t be monitored, or linked back to them if they use AI inappropriately in a class. They prefer to use their own AI, and that largely remains ChatGPT. There’s an excellent analysis of why we should be skeptical of institutional AI tools here.

More importantly, free chatbots like ChatGPT are now failing. Students used free AI chatbots for schoolwork because schoolwork was part of their daily lives, but it was not the only, or even the most important, part of those lives. Now they are unsubscribing in large numbers.

The backlash is strong and fueled by multiple concerns: the enshittification and manipulative nature of ChatGPT, environmental concerns, political concerns, and the idea (one that has always been there) that GenAI is not worth paying for. And it can’t be free forever, because OpenAI and all the others are running out of money and running out of options.

So what now? We were never at the center of all this; the students were. As GenAI becomes less relevant to their daily lives, we’re still here, trying to justify the massive amount of time, money, and energy we’ve spent out of an excess of FOMO. I’m watching the academic use cases and dreams get smaller and smaller. Did we really spend hundreds of hours and thousands and thousands of dollars so that we could sort and summarize our emails? Clean up syllabi? Write website copy?*

We can slow down. AI isn’t going anywhere, but it will likely keep retreating into more narrowly focused, discipline-specific uses. Even in library science there are some fascinating AI developments in search that can be examined and discussed, critically and slowly.

Lastly, to IT, administrators, and others: let the library back in. We’ve been stewards of the trust that the entire academy is built on. It would help our students; it would help us all.

All new technologies evolve, and they pass. Listen to the students and follow their lead. Whatever changes, we’re here. Ask us a question.

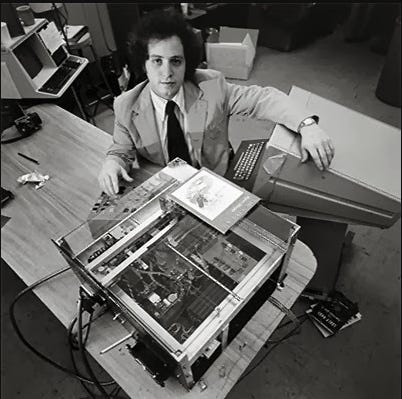

“Think of the efficiency of the thing!” Professor Entwhistle was really warming up. “Think of the time saved! You assign a student a bibliography of fifty books. He runs them through the machine comfortably in a weekend. And on Monday morning he turns in a certificate from the machine. Everything has been conscientiously read!”

“Yes, but the student won’t remember what he has read!”

“He doesn’t remember what he reads now.”