The Homework Machine Year Three

No, It Still Doesn’t Feel Like Learning

Every generation projects its deepest anxieties onto the newest tech. Yesterday, it was the amorality of the mad scientist; today, AI “experts” think that GenAI, when it becomes AGI, might destroy us, or save us.

Whether AI is cast as an existential menace, or a fantasy of Men Like Gods, promising salvation and a shining utopia (with the usual elites conveniently at the helm), the billionaire tech bros are busy burning through the planet’s resources while imagining GenAI as both monster and messiah, destroyer and savior of humanity. These are really two sides of the same coin.

But I’m just a librarian and educator and I can’t wrap my mind around every AI doomsday scenario in circulation right now. Looking back at the past three years, I’ll limit myself to the more scaled-down visions of my corner of academia.

In higher education, we can’t escape some of these tropes about GenAI. Especially the idea that this technology is all-powerful and getting more powerful every day; that it’s inevitable and that we can’t miss out, or be left behind.

But there’s something else, something very specific to us in the academy. We have our own fixations and mental models. And what was the first thing that we imagined and feared when confronted with GenAI three years ago?

From the beginning, the most resilient of pedagogical myths attached itself to the conversation around AI and is still present, albeit dressed up in formal language, in academic language, with early commentary feeding later iterations. The idea that all students everywhere are lazy, and that they cheat, all the time, and that they do so with whatever tools they have at hand.

Like everyone else, we filled GenAI tools with our own fears. Not consciously of course, but all of the angst and suspicion that colors our relationships with students was activated (mixed with other emotions, always, but the dread and jittery expectations left over from our own student days are some of the more dominant forces in these relationships).

AI is For Cheaters

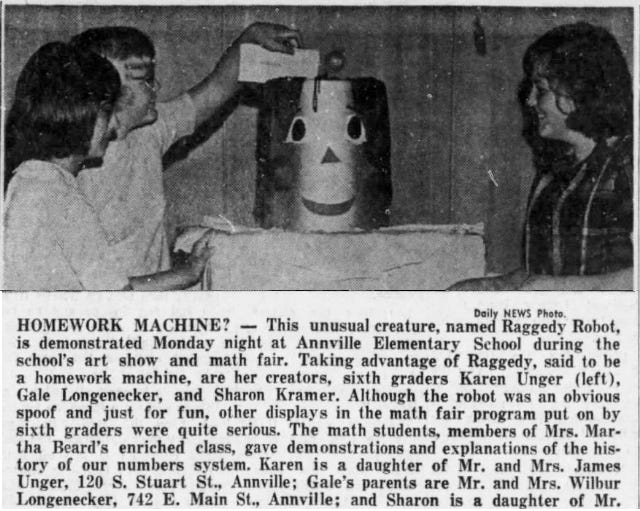

In an essay on AI in the university Katherine Schmidt outlines many of the ways that GenAI is diminishing the academic experience. AI is for Losers she says, and our students are the newest and least powerful losers. This is true for all kinds of reasons, but one of the most destructive is that we cast AI as a super-charged “homework machine”, and that this is what students everywhere were just waiting for because it was the ultimate easy way out. AI reactivated and reinforced the idea of the lazy, cheating student and we can’t seem to let go of that.

Some students will cheat as they’ve always done, but there’s no evidence that AI is making them cheat more.

As for the lazy part, we’ve made this mistake before with Google (OG). Remember the idea, from 2008, that Google was making us stupid? Google was going to make us dumb and students would use it and it would make them dumb and the whole thing would go to hell.

In the end, we all did use Google, and Wikipedia; we still do, and we are all still here.

When students are asked now if they use AI they mostly say yes. But does that mean that they’re using ChatGPT and other AI tools to consciously cheat on schoolwork and classroom assignments?

Speaking for my own university, we don’t yet have a coherent definition of what it means to use AI to cheat, so how can the students know where the line is? Universities are sending wildly mixed messages, my own included. We now have AI-free and device-free classrooms and spaces, while at the same time subscribing to, and paying for, AI tools for student use, and promoting them heavily. Students are caught between ‘AI gets you in trouble’ and ‘AI is the future’. Which is it?

How are Students Really Using GenAI?

OpenAI recently released data on how people (all people, not just students) are using its consumer product ChatGPT. A surprisingly small percentage of use was categorized as (non-personal) writing, editing, data analysis, or other tasks that could be interpreted as schoolwork. Search was big, which affects research activities. But the majority of use landed in categories labeled as guidance, relationships (?), self-expression, or media creation.

Some of these categories are a little … odd (although I like the classification “greetings and chit-chat” myself). It’s likely that these categories are a little fuzzy on purpose to minimize anything that carries a hint of emotional involvement (considering that OpenAI is currently being sued for that very reason).

Another, independent study of How People Are Really Using Gen AI in 2025 found that “therapy and companionship”, “organizing my life,” and “finding purpose” are the three most prevalent uses of ChatGPT in 2025. Homework doesn’t make the top of the list.

ChatGPT has 782 million weekly active users (as of this writing) with the majority of users, presumably young people, *not* using it to cheat. Probably. Depending on how you define cheating, which we have not.

As for “greetings, chitchat, and relationships”, AI is there. China’s “companion” AI service Xiaoice has 660 million users, 30 million are using Replika.ai, another companion app, and there are at least 20 million users of Character.ai.

So, yes, students are using AI, all the time. For advice, inspiration, companionship, creativity and more. Possibly not so much for homework. There are some statistics showing ChatGPT use going up during the school year, but that’s for a single product, and who’s to say at this point if it’s teachers or students chatting with the bots, or what they are asking them. Homework help? Moral support? Friend-zone study break? Who knows?

Despite having three long years to try and understand all of this, we can’t let go of the zombie idea of the student slacker - even when we layer a veneer of compassion over this persistent fallacy.

No, It Doesn’t Feel Like Learning

There have been attempts to justify this outdated archetype without “blaming” students (for what, being themselves?). A recent article posited that students are just victims of an overwhelming “cognitive bias” that practically compels them to use the cheating machine that is AI.

The faculty are sad it seems, and instructors need to find ways to discourage students from using GenAI (chatbots in particular) in “lazy and harmful” ways. Because the students cannot be expected to “forgo easy shortcuts”. AI is pleasurable but harmful. There is a cognitive bias for this, you see.

Multiple studies explaining this cognitive bias are cited that compare *passive* learning with *active* learning and conclude that being fed information and answers feels really good to students and “feels like” learning to them. Maybe that’s true (although I find it really strange that in several of the studies cited the term “actual learning” is used repeatedly with no clear definition).

One educator quoted in the article, Joss Fong, cites a study where a (passive) lecture experience was compared to a small (active) study group. The other studies also compare different methods of passive learning, like sitting in a lecture hall or watching educational videos, to active learning, which usually involved in-person interaction and discussion. In every case the passive method “felt more like learning”. *The passive learning methods were then equated with using GenAI.*

What’s Wrong With This Picture?

What’s wrong? Even if we actually knew what learning “felt like” or what “actual learning” was, this line of argument is deeply flawed. Why? Because GenAI and AI chatbots are not passive technologies.

GenAI tutors and tools are commercial products and they’re designed, on purpose, to be super-engagement tools in the mold of social media apps. They are created to stimulate interaction in order to keep users on the apps, not to foster passivity.

These tools are *not like passive lectures or videos* at all. Our students are deeply, and *actively* engaged with these technologies.

To quote Katherine Schmidt (again):

“… anthropomorphic mimicry [is] embedded into the very fabric of generative AI … The people who developed this technology mean to make lots of money off of people mistaking it for human. The younger we can force people into this mistake, the more reliant they will be upon it.”

We see this in the rapid adoption by students of conversational chatbots for things like feedback, inspiration, search, and social support. This is very different from their use of more utilitarian online study aids, even those that incorporate AI features.

When GenAI is used directly and consciously for classwork, it’s still a new species of tool. Do teens and college students spend their spare time hanging out on Grammarly? They do not. Bafflingly, GenAI in education has sometimes been compared to the introduction of the calculator. But I’ve never seen anyone talk to, or ask for inspiration, or anything similar, from their calculator. Can we not see the difference?

Our students are emerging from a locked-down life trailing clouds of memes and shaped by endlessly mutating trends that emerge from the black-box that is AI. The “stickiness” is baked in, and GenAI is being integrated into the parasocial life of young people with the lines between school, work, and social interaction quickly dissolving.

Natives of social media environments don’t see these platforms as tools in the way that we (older generations) do. These aren’t tools with a predictable cause-and-effect function; something where you just plug in the right data and you will get a right answer. Students are not looking for right answers, most of the time.

The perfect Homework Machine is our fantasy, not our students’. When we judge AI tools, and when we judge students for using them, we’re operating within a paradigm that is decades out of date. These tools are interaction machines and emotional support bots.

GenAI doesn’t feel like learning, it feels like life.

Combine this with our old fears (that tech’s endgame is to lull us into a deadly passivity; that AI, like Google before it, will rot our brains; that students have to cheat because it is their nature) and we are going down the wrong path.

What To Do?

· Stop calling everything “AI”. AI is a marketing term, it means nothing, it’s applied to everything nowadays. Try being more specific when in an instructional setting. Talk about “LLM text generator tools”, or LLM-created output. This can sometimes lead to a discussion of what LLMs are and how they work.

· Don’t “ban AI”. See above. AI is poorly defined, applied to everything, and is also now everywhere. If you try to ban it students won’t know what you mean. You will barely know what you mean. You will not succeed in eliminating AI from your classroom.

· Make students show their work. If a student used an AI-enhanced tool in any part of their work, have them write down when and how and which tool they used. I’m actually interested. It works in math, but even in a humanities class there is ideation, research, draft after draft, final product. As a librarian I do the middle part, research and ideation, so I know it is possible to do this in all fields.

· Universities. Don’t pay Google for their “AI Research Assistants” (I’m looking at you Gemini and NotebookLM) and promote them as “Research Assistants”. And don’t do that without talking to a librarian. And don’t do that while at the same time banning “AI” everywhere. And promoting these tools at the same time. I mean, what?